A lot of teams adopt AI, feel like they're moving faster for a week, and then wonder why nothing critical seems to be moving. The drafts are faster. The summaries are instant. The first pass gets done in half the time.

But the launch still stalls. The handoff still breaks. The same questions keep showing up in Slack. Someone still has to chase approvals, explain context again, and work out who actually owns the next step.

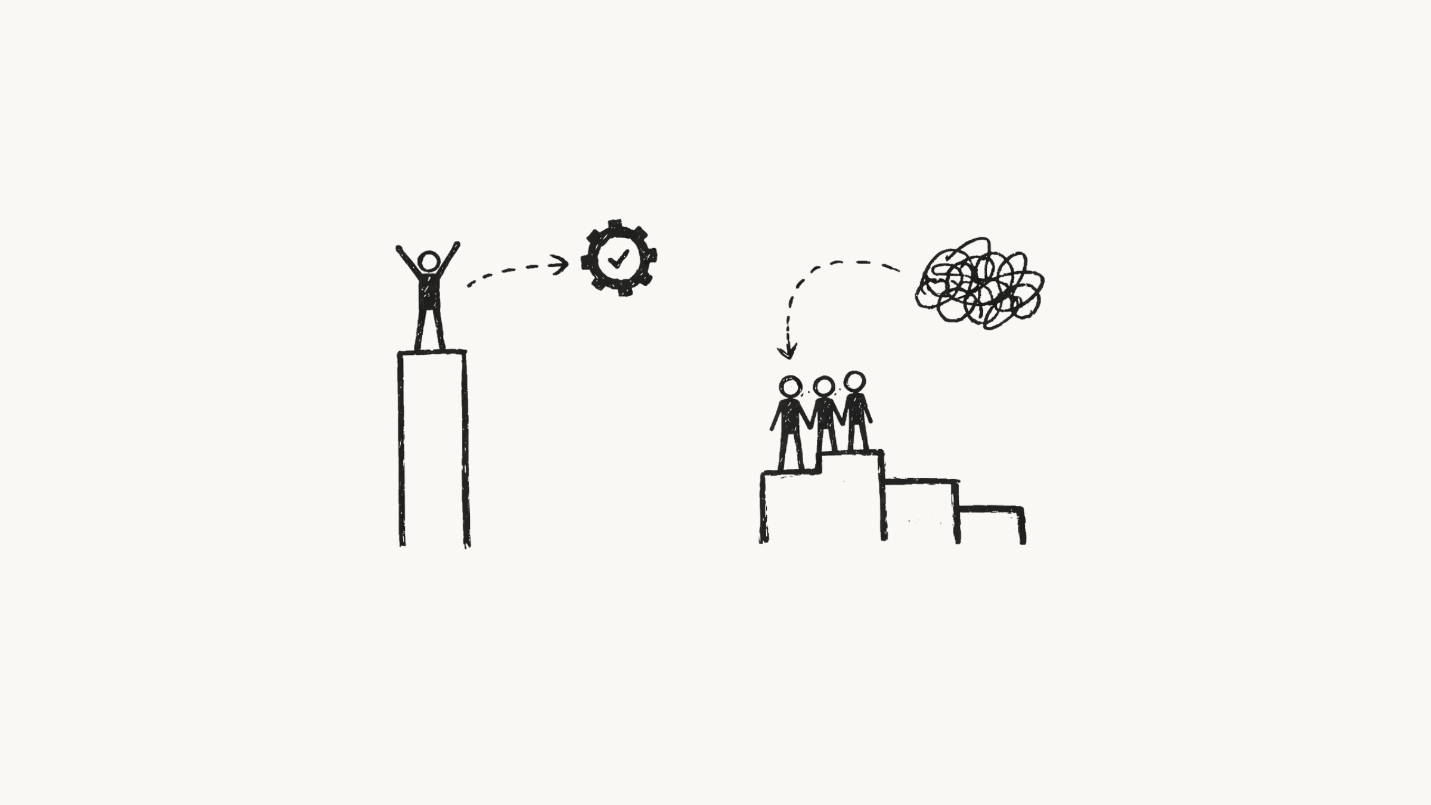

That's what this article is about. AI can make one person faster very quickly. It does not automatically make the team move faster.

That gap is starting to show up in the broader research too. A Microsoft report found that AI is delivering real productivity gains, but that speed alone is not enough; companies must rethink the workflow itself, not just add AI on top of existing work.

In small SaaS teams especially, the bottleneck usually isn't the writing. It's the coordination around the work.

Key Takeaways

- Individual AI wins do not automatically translate into team performance.

- As teams grow, coordination drag becomes the real bottleneck.

- Faster output often creates more loose ends if ownership, context, and approvals are still messy.

- Most productivity tools speed up tasks but leave the handoffs untouched.

- Stable workflows reduce follow-ups, context chasing, and rework.

- The real unlock is not more AI in more tools. It is dependable execution across the team.

AI can speed up one person. It doesn't automatically speed up the team.

Most AI tools are sold through individual demos.

One person makes a suggestion. One artifact is generated. Everyone nods. This looks like productivity.

But team performance isn't the same as personal speed. If one person can now produce work twice as fast, but the next step still depends on missing context, a delayed approval, or an unclear handoff, the team doesn't move faster. It just accumulates work faster.

If an SDR drafts 50 personalised emails in an hour but the CRM routing breaks halfway through the sequence, that isn't throughput. It's a faster way to create operational mess.

The distinction matters because leadership often buys tools based on individual demos. A founder sees a marketing manager summarise a call transcript in real time and assumes the go-to-market engine is now optimised. It isn't.

The summary sits in a Slack channel. The engineer doesn't see it. The product owner doesn't know the priority. The work lands in a void. Individual AI improves task speed. Team performance improves when that speed is absorbed by stable operating workflows.

Research suggests model capability is high, but integration reliability varies. That variance is where team performance dies. You don't need smarter prompts. You need tighter handoffs. The goal is not to make one person faster. It's to make the chain of work more reliable.

When one link speeds up and the next link stays slow, inventory builds up. In knowledge work, inventory is unfinished tickets, unacknowledged messages, and ambiguous requirements. This is why the gap between individual AI productivity and team performance persists in most growth-stage organisations.
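To make the inventory point concrete, here is a minimal sketch of a two-stage pipeline: one person drafts, the next person reviews. The function name, the stage names, and the rates are all illustrative assumptions, not measurements from any real team.

```python
def simulate_pipeline(draft_rate: int, review_rate: int, hours: int) -> tuple[int, int]:
    """Return (items_delivered, items_waiting) after `hours` of work.

    draft_rate and review_rate are items per hour for two consecutive stages.
    All numbers here are hypothetical and only illustrate the bottleneck effect.
    """
    waiting = 0    # drafted but not yet reviewed: the "inventory"
    delivered = 0  # items that made it through both stages
    for _ in range(hours):
        waiting += draft_rate                  # upstream stage adds work
        reviewed = min(waiting, review_rate)   # downstream stage is capped
        waiting -= reviewed
        delivered += reviewed
    return delivered, waiting


# Before AI: drafting and review are balanced.
before = simulate_pipeline(draft_rate=5, review_rate=5, hours=8)

# After AI: drafting doubles, review stays the same.
after = simulate_pipeline(draft_rate=10, review_rate=5, hours=8)

print(before)  # (40, 0)
print(after)   # (40, 40)
```

In this toy model, doubling the drafting speed delivers exactly the same amount of finished work. The only thing that grows is the pile waiting for the next step.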

Coordination drag is what actually slows teams down

As SaaS teams grow, the work between the work starts to dominate. Not the headline tasks. The invisible ones. The follow-ups. The re-summaries. The "quick clarifications." The status pings. The handoffs that should have been clean but weren't.

This is coordination drag. It's the hidden work that makes teams feel busy while actual delivery slows down. The missing layer isn't more prompts or more tools. It's workflow stability: clear ownership, captured context, and reliable handoffs that keep work moving without constant chasing.

This gets misdiagnosed as a people problem all the time. It usually isn't. The team isn't slow. The workflow is noisy. Slack is full of this fake work: chasing, clarifying, nudging, summarising, re-triaging. Add AI into that environment without fixing the workflow and you don't remove the noise. You speed it up.

Faster output just creates more fragments to reconcile unless someone has already solved ownership, timing, and context. That's why AI doesn't really remove the coordination cost. It just moves it.

Instead of waiting for someone to write the update, you wait for someone to verify the AI-generated update because the context got lost somewhere between tools. The bottleneck usually isn't technical. It's operational. It's the gap between generation and execution.

Until that gap is fixed, you're not automating the workflow. You're automating confusion.

A fast AI workflow can still quietly break revenue work

Take a simple example.

A product manager uses AI to turn customer call notes into CRM updates and follow-up tasks in minutes instead of hours. On paper, that looks like a win. The PM is faster. The notes are richer. The tasks appear instantly.

Then a CRM field name changes. Routing breaks. Nobody notices. Nobody clearly owns the workflow. For two weeks, follow-ups never reach the right queue.

The PM got faster. The team didn't. Revenue work stalled quietly because nothing in the workflow was checking for drift, preserving context, or escalating failure when something broke.

This is what makes these failures so expensive. The speed gain is real. The breakdown is just delayed. Engineering ends up rebuilding the integration because the schema changed. Sales manually reassigns tasks because the owner field is empty. The PM apologises because the notification never fired and the handoff never happened. The AI did its part. The team still paid for the failure.
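A drift check for this kind of failure does not have to be elaborate. The sketch below assumes a CRM record arrives as a plain dictionary and that routing depends on three fields. The field names, the `check_record` and `route` helpers, and the alert callback are hypothetical, not any particular CRM's API.

```python
# Hypothetical field names the routing step depends on.
REQUIRED_FIELDS = {"account_id", "owner", "follow_up_queue"}


def check_record(record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record is safe to route."""
    problems = []
    # A renamed or dropped field shows up as missing.
    for field in sorted(REQUIRED_FIELDS - set(record)):
        problems.append(f"missing field: {field}")
    # A field that exists but is blank is just as unroutable.
    for field in sorted(REQUIRED_FIELDS & set(record)):
        if not record[field]:
            problems.append(f"empty field: {field}")
    return problems


def route(record: dict, alert) -> bool:
    """Route the record only if it passes the check; otherwise raise an alert."""
    problems = check_record(record)
    if problems:
        alert(problems)  # fail loudly instead of dropping the follow-up
        return False
    return True


# Simulate the failure described above: "owner" was renamed upstream.
alerts = []
drifted = {"account_id": "A-1", "owner_name": "dana", "follow_up_queue": "sales"}
routed = route(drifted, alerts.append)

print(routed)  # False
print(alerts)  # [['missing field: owner']]
```

The check itself is trivial. What matters is that a failed check goes to a named person on day one, instead of surfacing two weeks later as missing follow-ups.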

The difference between automation and stability

Most AI tools optimise for output. Ayven is built around a different assumption: output is not the hard part. Keeping work moving is.

That means fewer dropped handoffs, fewer follow-ups, less context chasing, and less work stalling because the wrong person was offline at the wrong moment.

The point is not "more AI." It's dependable help that still works when the workflow gets messy. That's where governance becomes operational, not theoretical. A report by OneTrust found that 75% of respondents said AI exposed the limitations of legacy governance processes, and 73% said it revealed gaps in visibility, collaboration, and policy enforcement.

That doesn't mean every team needs a new layer tomorrow. It does mean that if ownership is fuzzy, handoffs are weak, and timing is implicit, adding more tools is unlikely to fix much.

This is the difference between automation and stability. Automation moves data. Stability makes sure it lands in the right place, at the right time, with the right owner. Automation can trigger a workflow. Stability makes sure the workflow actually survives contact with reality.

That's the layer most teams are missing. It's what stops the endless questions: Who owns this? Can you resend that? Why didn't this move? Are we still waiting on approval? A stable workflow turns scattered context into accountable action. It knows when something can move, when it should wait, and when a human needs to step in.

That's the real difference between a script that runs and a process that delivers. And in a distributed team, that difference matters more than speed. A slow, reliable flow beats a fast, broken one every time.

What teams can do now

You do not need a six-month transformation programme to improve this.

Start by fixing the parts of the workflow that leak the most momentum.

- Map ownership clearly. Every automated task needs a named owner for the next step.

- Define failure states. Decide what happens when routing breaks, data drifts, or approvals do not arrive.

- Capture context once. Put the decision and the reason somewhere durable.

- Set timing rules. If someone is offline, the workflow should escalate instead of waiting forever.

- Review handoffs. Look at the last ten automated handoffs. Where did they stall, bounce, or need manual cleanup?

These steps are about reducing coordination drag, not producing more activity. You're not trying to generate more tickets. You're trying to close more loops. That's the difference.
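As a rough illustration, the ownership, context, and timing rules above can be written down as data rather than left implicit. The `Handoff` structure, its field names, and the four-hour window below are assumptions chosen for the example, not a description of any specific product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Handoff:
    task: str
    owner: str                 # named owner for the next step
    backup: str                # who takes over if the owner does not respond
    context: str               # the decision and the reason, captured once
    created_at: datetime
    ack_within: timedelta      # timing rule: how long to wait before escalating
    acknowledged_at: Optional[datetime] = None


def next_action(h: Handoff, now: datetime) -> str:
    """Decide whether the handoff can move, should wait, or needs escalation."""
    if h.acknowledged_at is not None:
        return f"in progress with {h.owner}"
    if now - h.created_at > h.ack_within:
        return f"escalate to {h.backup}"
    return f"waiting on {h.owner}"


handoff = Handoff(
    task="Send renewal follow-up",
    owner="priya",
    backup="sam",
    context="Customer asked for annual pricing on the last call",
    created_at=datetime(2024, 1, 1, 9, 0),
    ack_within=timedelta(hours=4),
)

print(next_action(handoff, datetime(2024, 1, 1, 10, 0)))   # waiting on priya
print(next_action(handoff, datetime(2024, 1, 1, 14, 30)))  # escalate to sam
```

The design choice worth noting is that "waiting forever" is not a possible state. Every handoff is either acknowledged, inside its window, or escalated.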

The goal isn't to make one person look faster. It's to make the work keep moving across the team.

What a stability layer does not solve

A stability layer is not magic. It adds checks. It adds verification. Sometimes it adds latency. That's the trade-off.

You cannot remove all friction without increasing risk. And if you add too many gates, you kill the benefit.

It also doesn't solve the wrong problems.

- It won't fix a bad product strategy.

- It won't repair a team that doesn't trust the workflow.

- It won't replace judgment.

- And it won't save a process that nobody actually follows.

If the team keeps bypassing the system, the workflow is not stable. It is optional. That part still has to be earned.

Ayven can help make execution more reliable. It cannot supply the intent, the trust, or the decision-making for you. Humans still have to define the logic. Humans still have to decide where risk is acceptable. Humans still have to step in when the stakes are high.

That isn't a flaw. It's the point. Stability costs time. Chaos costs more.

Moving from local wins to team momentum

Designing for reliability instead of raw speed is how local AI wins turn into team momentum.

If AI has made parts of your team faster but delivery still feels stuck, the missing layer is probably not another tool. It is workflow stability.

Start with the handoffs. That is usually where the drag is hiding.

AI can make one person faster.

Team performance improves when the workflow stops leaking momentum.

That is the layer we are building at Alknoma.